I listen to a few podcasts regularly as part of my daily routine while walking my dog or doing chores. The podcasts that I personally listen to are not movie podcasts, but they mention movies almost every episode. I love the hosts and love to keep up with their recommendations, but I don’t want to write down every movie they mention, because I can almost guarantee that I’m doing something else while listening.

I decided to do something about it and create a tool for exactly this purpose. Since I’m a full-stack software and cloud engineer, I took it too far and now have to write about it.

I built deepod.io, a site that tracks movies mentioned in podcasts. I started with the podcasts that I listen to regularly, Evil Men and The Ben & Emil Show, and have begun to expand to other podcasts. Setting up the two initial podcasts showed me some constraints around cost and rate limiting (specifically with LLM calls and transcription), so I modified the extraction pipeline to work without my intervention and to cost me as little as possible. (It still costs me a bit; more on that later.)

Here, “extraction” means turning a podcast transcript into a structured list of movie mentions (and matching them to TMDB when possible).

Check it out and if you’re into movies, podcasts, or podcasts about movies, let me know if there’s something you’d be interested in seeing on Deepod.

The Pipeline

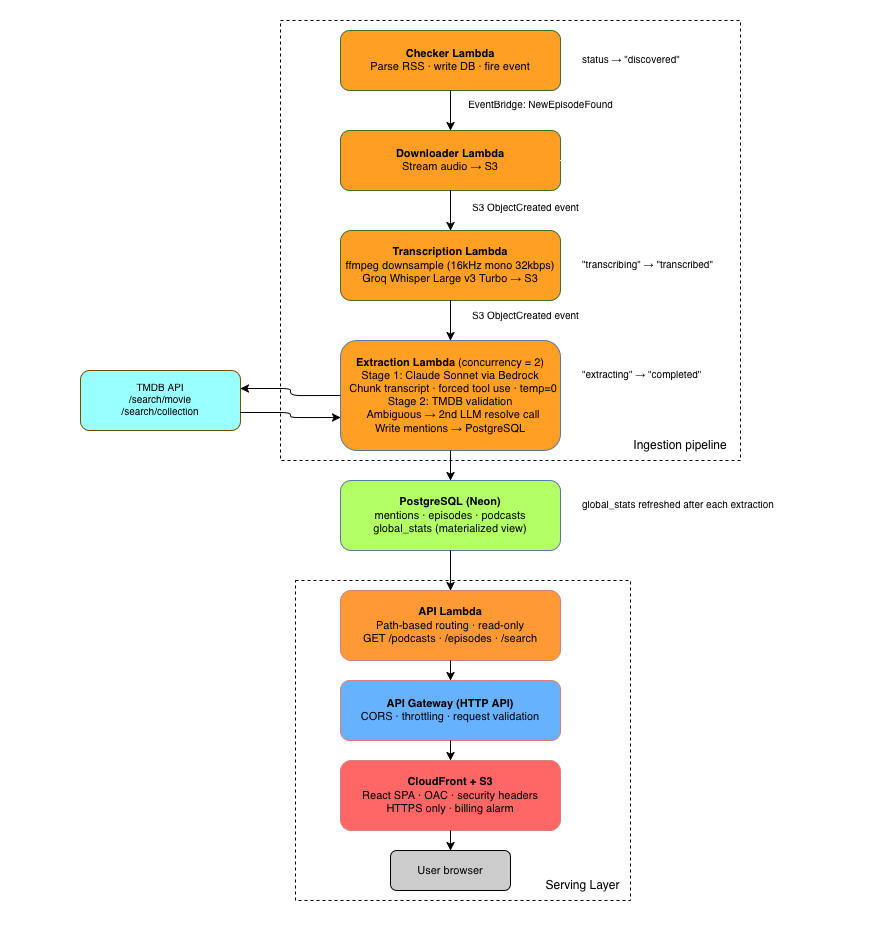

TL;DR: If you don’t want to read my ramblings about the different tradeoffs I had to make, the realtime extraction pipeline looks like this:

I use “realtime” for new episodes as they drop, and “batch” for backfilling older podcast archives more cheaply (with a longer turnaround).

I have a series of Lambda functions that check the podcast’s RSS feed, download the audio and upload it to S3, transcribe it, and then run extraction and validation with AWS Bedrock and calls to the TMDB API.

The main motivation for using EventBridge and breaking up the Lambda functions is scaling. The “checker” Lambda function can fire off tens of events in a single run while checking multiple RSS feeds, and each event starts a new instance of the next Lambda function in the chain. Since the episodes are eventually sorted by publish date, the order in which they are processed doesn’t matter.
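As a rough illustration of that fan-out, here is a minimal sketch of how a checker might publish one event per new episode. The source name, detail type, and function names are hypothetical, and `events_client` is assumed to be a boto3 EventBridge client (`boto3.client("events")`) or anything with the same `put_events` method. The only hard fact baked in is that `PutEvents` accepts at most 10 entries per request.

```python
import json
from typing import Any, Iterable

# EventBridge PutEvents accepts at most 10 entries per request.
MAX_ENTRIES_PER_CALL = 10


def build_entries(episodes: Iterable[dict[str, Any]]) -> list[dict[str, str]]:
    """Turn new-episode records into EventBridge entries (names are hypothetical)."""
    return [
        {
            "Source": "deepod.checker",          # hypothetical source name
            "DetailType": "EpisodeDiscovered",   # hypothetical detail type
            "Detail": json.dumps(episode),       # Detail must be a JSON string
        }
        for episode in episodes
    ]


def publish(events_client: Any, entries: list[dict[str, str]]) -> int:
    """Send entries in batches of 10 and return how many the service rejected."""
    failed = 0
    for start in range(0, len(entries), MAX_ENTRIES_PER_CALL):
        batch = entries[start:start + MAX_ENTRIES_PER_CALL]
        response = events_client.put_events(Entries=batch)
        failed += response.get("FailedEntryCount", 0)
    return failed
```

Each published event can then match a rule that triggers the next function, so 23 new episodes would mean three `put_events` calls and 23 independent downstream invocations.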

The download and transcription steps could likely be combined into a single function for 90% of episodes, but I keep them as separate functions to handle larger episodes, which I have already seen often enough to warrant the split.

The transcription function is pretty straightforward. It uses Groq’s (NOT the Twitter one) Whisper Large V3 Turbo model to transcribe audio files quickly and at an extremely low cost. Groq is amazing, can’t recommend it enough. After a few tests of longer podcast episodes, I found that splitting the audio into a few chunks and adding a retry was mostly what I needed to handle bulk extraction, including with longer episodes.
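The chunk-and-retry idea can be sketched without touching any particular transcription API. In the sketch below, the 10-minute chunk length, the retry counts, and both function names are assumptions for illustration; the actual transcription call is left as a callable that the caller passes in.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def chunk_ranges(duration_s: float, chunk_s: float = 600.0) -> list[tuple[float, float]]:
    """Return (start, end) offsets in seconds that cover the whole episode."""
    ranges = []
    start = 0.0
    while start < duration_s:
        end = min(start + chunk_s, duration_s)
        ranges.append((start, end))
        start = end
    return ranges


def with_retry(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(), retrying with exponential backoff; re-raise after the last attempt."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except Exception:
            if attempt == attempts - 1:
                raise
            # Wait 1s, 2s, 4s, ... before trying again.
            sleep(base_delay_s * 2 ** attempt)
    raise AssertionError("loop always returns or raises")
```

In use, each range from `chunk_ranges` would be cut from the audio file and passed to something like `with_retry(lambda: transcribe_chunk(path, start, end))`, where `transcribe_chunk` is a hypothetical wrapper around the transcription request.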

The extraction lambda function is the meat of this operation and is what provides the real value to this whole tool. I iterated a lot on this process and ended up manually writing down movie mentions from a few podcast episodes to have a “golden” dataset of podcast movie mentions.

The function splits the transcript into slightly overlapping chunks and then does the extraction in two stages:

- Calls Claude via Bedrock with a detailed prompt, forced tool use, and structured JSON output to find movie mentions. The prompt includes notes about context and examples of right and wrong extractions.

- Searches TMDB (like IMDb, but with a friendlier API) for each mention, divides mentions into franchises or individual films, scores results by title/year match, and fires another quick LLM call to resolve ambiguous mentions.
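The overlapping chunks and the title/year scoring described above could look roughly like the sketch below. The window sizes, the 0.8/0.2 weights, and the function names are all assumptions for illustration, not the values the real pipeline uses. The TMDB search and the LLM calls are left out.

```python
from difflib import SequenceMatcher
from typing import Optional


def overlapping_chunks(words: list[str], size: int = 800, overlap: int = 100) -> list[list[str]]:
    """Split a transcript into word windows that share `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + size])
        if start + size >= len(words):
            break  # this window already reached the end of the transcript
    return chunks


def score_candidate(
    mention_title: str,
    mention_year: Optional[int],
    candidate_title: str,
    candidate_year: Optional[int],
) -> float:
    """Score a search result from 0 to 1: title similarity plus a bonus for a matching year."""
    title_score = SequenceMatcher(
        None, mention_title.lower(), candidate_title.lower()
    ).ratio()
    if mention_year is None or candidate_year is None:
        return 0.8 * title_score  # no year to compare, so no bonus
    year_bonus = 1.0 if mention_year == candidate_year else 0.0
    return 0.8 * title_score + 0.2 * year_bonus
```

The overlap exists so that a mention straddling a chunk boundary still appears whole in at least one chunk. With a score like this, a clear top candidate can be accepted directly, and only close calls need the second LLM call.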

The function then writes the mentions to a table in a Neon Postgres database. From there, they are served to the user through a simple React SPA using CloudFront, API Gateway, and a Lambda function.

Pivots

Extraction Accuracy

The extraction is not 100% accurate; I’d say it’s close to 90%. If you have worked with LLMs, you know that they sometimes do this thing where they deliberately just don’t follow your instructions. While I was iterating on the pipeline, I found that it would be pretty good but then make some really dumb mistakes here and there. I wanted to make it perfect, but I also wanted to move along, so I decided I would publish this thing, allow any devoted users to edit the extractions, and come back to it if it made sense in the future. At the very least, the combination of scoring, the API call, and the second LLM call is a good framework that leaves room to tweak and improve in the future.

Transcription Costs

I started the project using AWS Transcribe to transcribe a few podcast episodes, because it made sense at the time. If I can reasonably stay within the walls of AWS for a personal project, I probably will. Also, ~$0.024 per minute with a free tier seemed cheap enough, and I wanted to see some quick results and move to the next step. A couple of weeks later, my jaw hit the floor when I saw my AWS bill (I won’t tell you how much it was), and it was time to find a new transcription service. Groq is ~$0.0006 per minute, roughly 1/40th the cost of AWS Transcribe, and does the trick just as well.
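To make the per-minute gap concrete, here is a small back-of-the-envelope calculation using the two approximate rates quoted above. The backlog size (300 episodes of 60 minutes) is a made-up example, not the actual episode count or bill, and free-tier minutes are ignored.

```python
TRANSCRIBE_PER_MIN = 0.024   # USD, approximate rate quoted above
GROQ_PER_MIN = 0.0006        # USD, approximate rate quoted above


def transcription_cost(minutes: float, rate_per_min: float) -> float:
    """Cost in USD of transcribing `minutes` of audio at a flat per-minute rate."""
    return minutes * rate_per_min


# Hypothetical backlog: 300 episodes averaging 60 minutes each.
backlog_minutes = 300 * 60

print(f"AWS Transcribe: ${transcription_cost(backlog_minutes, TRANSCRIBE_PER_MIN):,.2f}")
print(f"Groq:           ${transcription_cost(backlog_minutes, GROQ_PER_MIN):,.2f}")
print(f"Ratio:          {TRANSCRIBE_PER_MIN / GROQ_PER_MIN:.0f}x")
```

For that hypothetical backlog, the difference is roughly $432 versus about $11.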

When I really got going on extracting from hundreds of episodes to fill the backlog, I was hit by a Groq rate limit. My own scaling strength came back to bite me! So I had to throw a concurrency limit on my lambdas and a retry on transcription. It took longer from here on out, but it worked and didn’t cost too much.

Batching

I probably read about it in an AWS release at some point before, but I learned about the practicality and huge cost savings of Bedrock Batch Inference while working on this project. Basically, if you can afford to wait 2-24 hours for the results of your LLM API calls, you can pay half the price for them. What a deal. For extracting movie mentions from podcasts, which no one even asked for other than me, I definitely don’t need instant results. I hooked up my extraction to send batch jobs to Bedrock and wrote another script to pick up the results and write them to the database. This is not present in the “realtime” pipeline above (hence the name) but is something I ran locally to process a new podcast with hundreds of episodes. I plan to add more podcasts and will use the batch method to process podcast backlogs, and the realtime method to get new individual episodes for podcasts I already track.
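For a sense of what a batch job consumes, here is a minimal sketch that builds the JSONL input file, one request per line. It assumes the documented Bedrock batch record shape (`recordId` plus `modelInput`) and the Anthropic Messages request body; the record ID scheme, token limit, and function name are hypothetical, and the current Bedrock documentation is the place to confirm format details such as record ID constraints. Uploading the file to S3 and creating the job are left out.

```python
import json


def build_batch_lines(prompts: list[str], max_tokens: int = 1024) -> tuple[str, dict[str, int]]:
    """Return JSONL text plus a map from record ID back to the prompt's index."""
    lines = []
    index_by_id: dict[str, int] = {}
    for i, prompt in enumerate(prompts):
        record_id = f"REC{i:08d}"  # hypothetical 11-character alphanumeric ID
        index_by_id[record_id] = i
        record = {
            "recordId": record_id,
            "modelInput": {
                "anthropic_version": "bedrock-2023-05-31",
                "max_tokens": max_tokens,
                "messages": [
                    {"role": "user", "content": [{"type": "text", "text": prompt}]}
                ],
            },
        }
        lines.append(json.dumps(record))
    # One JSON object per line, each terminated by a newline.
    return "".join(line + "\n" for line in lines), index_by_id
```

The returned ID map is what lets a pickup script match each output record back to the transcript chunk that produced it, since batch results are not guaranteed to arrive in a convenient order.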

Looking Ahead

This is obviously just a side project, and every eager engineer has a long list of things they would do with their side project if they had time. For this one, I plan to make a very robust extraction pipeline. It’s not about finding the perfect method and then using it for every single podcast, but more about acknowledging that I will likely always need to improve this extraction in some way, so I need to be able to run it again and again, at low cost, as I make those improvements. I plan to add other media mentions like books, video games, and music. If someone comes along and finds value in a type of extraction I haven’t even considered yet, I want to be able to support it, which is another reason I’m investing in making the pipeline robust.

Thank you for reading and feel free to reach out with any questions or comments, or podcasts you want to see added to Deepod!